Gmail really wants me to say yes

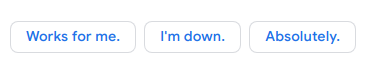

Look what’s at the bottom of my email. A neat little menu allowing me to reply without typing:

It's not surprising that they get this wrong.

Maybe whatever fresh-faced grad student built this on their summer internship flubbed the feature engineering, modeling auto-replies as a library of independent phrases rather than a library of distinct choices. A lot of ML still comes down to feature engineering, i.e., it's art as much as science.
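Here's a toy illustration of that guess, not how Gmail actually works. The phrases, intent labels and scores are all made up. If you rank every canned phrase on its own score and show the top three, you can end up with three flavors of yes. If you first group phrases by intent and keep only the best phrase per intent, 'no' gets a slot.

```python
# Hypothetical candidate replies: (text, intent, score). Made-up numbers, not Gmail's model.
CANDIDATES = [
    ("Sounds good!", "yes", 0.31),
    ("Sounds great!", "yes", 0.27),
    ("Yes, I'm in.", "yes", 0.22),
    ("Sorry, I can't.", "no", 0.12),
    ("Let me check.", "maybe", 0.08),
]

def top_k_independent(candidates, k=3):
    """Rank every phrase on its own score and keep the k best (a library of phrases)."""
    ranked = sorted(candidates, key=lambda c: c[2], reverse=True)
    return [text for text, _, _ in ranked[:k]]

def top_per_intent(candidates):
    """Keep only the best phrase for each intent (a library of choices)."""
    best = {}
    for text, intent, score in candidates:
        if intent not in best or score > best[intent][1]:
            best[intent] = (text, score)
    # Order the surviving intents by their best score, highest first.
    return [text for text, _ in sorted(best.values(), key=lambda t: t[1], reverse=True)]

print(top_k_independent(CANDIDATES))  # ['Sounds good!', 'Sounds great!', "Yes, I'm in."]
print(top_per_intent(CANDIDATES))     # ['Sounds good!', "Sorry, I can't.", 'Let me check.']
```

Same scores, same phrases; the only difference is whether the menu is built from phrases or from choices.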

Or maybe ‘no’ struck someone as too negative and they had to take it out.

If I'd seen something like this in the 90s I wouldn't have thought Black Mirror, I'd just have thought 'this technology doesn't work yet'. Remember Dr. Sbaitso, the text-to-speech chatbot that came on a single floppy with Creative Labs sound cards? That was the least disappointing of the technology near-misses we got back then.

Now I think Black Mirror a little, but Occam's razor still prefers incompetence to malice.

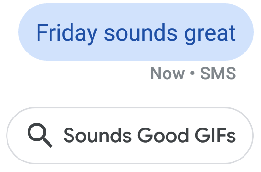

It's not just autocomplete that doesn't want me to say no. This is kind of meta because I've turned this autocomplete feature off; I'm sure of it. Did I just do it on my phone? Did my Wi-Fi blip so the AJAX request failed? I certainly never turned it on.

What does settings creep have to do with fortune-cookie autosuggest? Both want me to interact with the world using a limited menu of ‘yes’ options.

Maybe yes forever later

Why am I not more pissed about this?

There's a scary dystopia where we're trapped by badly designed prompts that tyrannize us but evade legal responsibility behind the veil of incompetence and shapeshifting EULAs.

With my product hat on, it occurs to me that when products get pushy about config settings, it means they're desperate for you to turn something on. You can taste the dank PM sweat dripping from a prompt that, instead of offering 'Yes', 'Later' and 'Never ask me again', offers 'Yes forever' and 'Maybe later'. Sooner or later I'm going to slip and tap yes by accident, and then some app will get microphone access on my phone.

Why am I not more pissed about this? I think because ‘maybe yes forever later’ isn’t a sign of G’s dominance or power over my life, it’s a sign that they’re afraid of losing it all and are employing dark patterns to hold on to me. And like most strategies conceived in desperation, it has a 50/50 chance of backfiring and driving people away.

The other shoe has dropped

This has gone beyond grabbiness. I had the 'grabbiness' moment in 2005, in my college dorm, the first time I saw a friend doing searches from a browser logged in to Gmail. At the time I thought 'why would any user allow this', then 'how can they in good conscience ask this of their users', and finally had a 'what splendor, it all coheres' moment of understanding that G was successfully monetizing behavior data.

Since then I’ve been waiting for the other shoe to drop.

Now this has entered the realm where G's much-touted ML arm can't deliver usable products and they're employing dark UX patterns, both intentionally and by accident. In Titanic terms, the water is entering the engine room.

My friends who five years ago didn't care about consumer privacy still don't, but they're way more educated than they were: they can talk coherently about usability, filter bubbles, identity theft, negotiation risk, all the measurable impacts of a data-driven society dominated by free products built by data brokers.

They've learned, and that's more dangerous than caring, because it means they're rationally pricing these harms. The day that 20% of consumers put a price tag on privacy, freemium is over and privacy is back.

Appendix: more examples & 'sounds good' GIFs

After this article got some views, my Android device was creepily and probably coincidentally opted in to some next-level version of this:

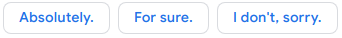

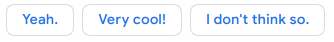

Also here are some more ‘smart reply’ examples from my inbox:

To a friend asking me to refer him an ops person:

To my mom asking an important question about taking classes:

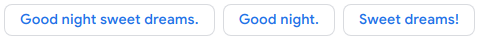

To my mom saying good night (i.e. ‘are you alive’):

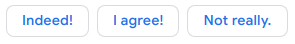

To a friend’s one-liner reply to an article I sent:

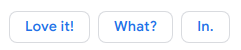

To a one-liner reply by my uncle to my dad on a family email chain:

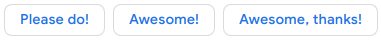

To a friend asking me to do a friend-of-a-friend a favor:

To me sending a list of news article URLs to myself from my work email account:

A few years ago I hooked up a chatbot to a work AIM account that we used for emergencies during the trading day, and went to the bathroom. When I came back, everyone was either mad at me or thought I was mad at them.

This round feels like a nightmare where I open my mouth and Valentine's Day hearts come out. BE MINE.